We Designed an LLM Trading Agent. Then We Didn't Need One.

Postmortem of the $1.30/day LLM trading agent pitch. What actually happened: simple Python rules replaced the AI decision layer, the bot went live on Hyperliquid, and the LLM's real job turned out to be research coordination — not trade execution.

The Original Pitch (February 2026)

This post originally described a three-layer architecture: Python for data, Claude for trade decisions, Python for execution. The LLM would interpret on-chain context every 15 minutes and output structured trade signals. Estimated cost: $1.30/day. The framing was clean, the diagram was elegant, and the core thesis — “LLMs reason about context, not math” — felt right.

One month later, none of that is what we built. This is the postmortem.

What Actually Happened

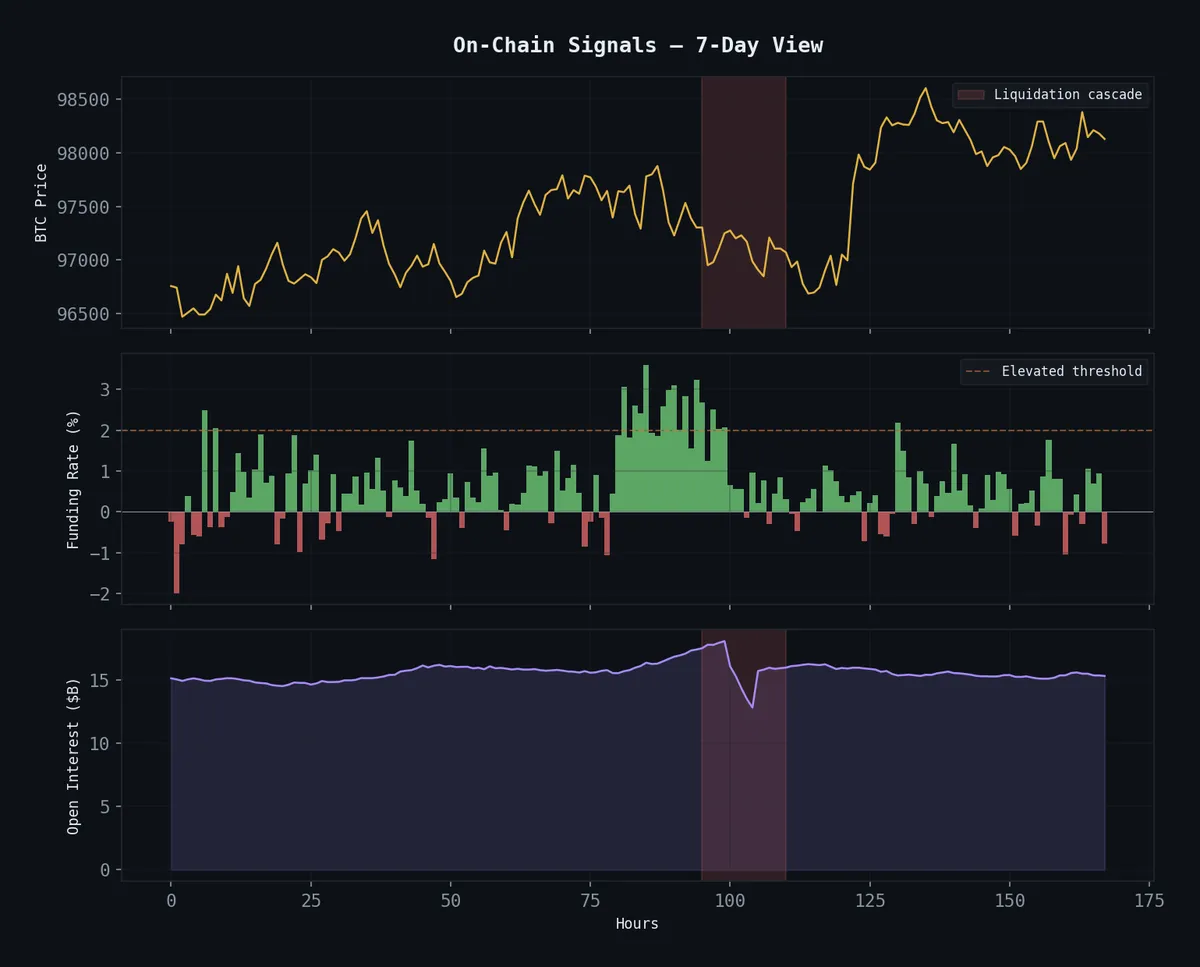

The cascade-fade scalper went live on Hyperliquid on March 7, 2026. It runs as cascade-executor, a systemd service. SOL and ETH. $298 account, $100 notional per trade, $30 daily loss limit. It fades liquidation overshoots using a detection algorithm that fits in 40 lines of Python.

The LLM decision layer was never built. Not because we couldn’t — because we didn’t need to.

The signal detector watches 1-minute OHLCV bars for two conditions: 5-bar price velocity exceeding a threshold, and a volume spike above 3× the rolling average. When both fire, it fades the move. That’s it. No funding rate interpretation, no regime detection, no “contextual synthesis.” Two numbers, one comparison, one trade.

┌─────────────────┐ ┌────────────────┐

│ Detection │───── signal ──────────▶│ Execution │

│ (Python) │ │ (Python) │

│ │ │ │

│ • 1min OHLCV │ │ • $100 notional│

│ • 5-bar velocity│ │ • $30/day cap │

│ • 3× vol spike │ │ • Timed exit │

│ │ │ │

│ Cost: $0 │ │ Cost: $0 │

└─────────────────┘ └────────────────┘

▲ │

│ results, logs │

│ ┌──────────────────┐ │

└─────────│ LLM Layer │◀────────────┘

│ (OpenClaw) │

│ │

│ • Research coord │

│ • Backtest runs │

│ • Blog writing │

│ • Param tuning │

│ │

│ Cost: variable │

│ (not per-trade) │

└──────────────────┘The architecture inverted. The LLM doesn’t sit between data and execution — it sits outside the trade loop entirely. Its job is research, orchestration, and reflection. It designs the rules. It doesn’t execute them.

Why the LLM Decision Layer Died

Three reasons, in order of importance:

Latency. A cascade event on SOL unfolds across 3-5 one-minute bars. An API call to Claude takes 2-8 seconds. On a good day, the LLM responds before the entry window closes. On a bad day, you’re fading a move that already reverted. Simple Python checks run in microseconds.

Cost asymmetry. The original $1.30/day assumed 96 LLM calls (every 15 minutes). But cascade events are rare — maybe 2-5 per day per coin. Paying for 96 “nothing happening” analyses to catch 3 signals is a terrible trade. The actual execution cost with pure Python is $0.

Unnecessary complexity. We spent February building the panic scorer, the funding rate calibration, the regime detection scaffolding. Then we tested a dumb version — velocity + volume — and it worked better. Not because the LLM reasoning was wrong, but because the signal we were detecting doesn’t require reasoning. A liquidation cascade is a mechanical event. Price drops fast on big volume. You don’t need a language model to notice that.

The hard-won lesson: match the tool to the problem’s actual complexity, not the complexity you imagined during architecture planning.

Where the LLM Actually Earns Its Keep

The bot’s rules are simple. Finding those rules was not.

The research pipeline that produced the cascade-fade strategy involved:

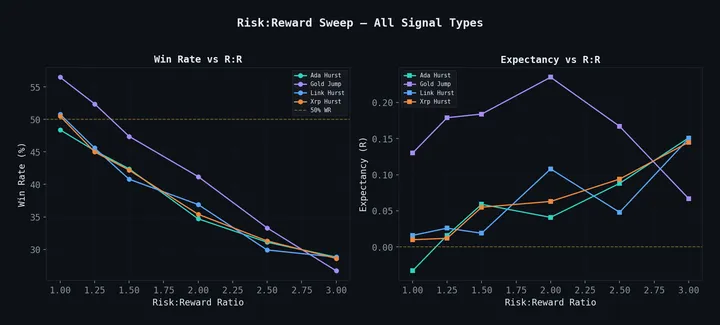

- Spawning parallel sub-agents to test 31 strategy variants across different asset classes

- Applying Aldo Taranto’s BGCSP thesis (298 pages on constrained stochastic processes) to optimize hold duration selection

- Walk-forward validation with out-of-sample windows — the systematic research process produced a champion with 68.5% win rate and 3.21 profit factor on OOS data

- Killing strategies that didn’t survive honest testing (27 of 31 died)

That research coordination is where Claude burned tokens. Not on “should I buy SOL right now?” but on “design a walk-forward test for this hypothesis, run it, interpret the results, decide if we keep or kill.” The LLM is the research director, not the trader.

Cost breakdown, corrected:

| Component | Frequency | Daily Cost |

|---|---|---|

| Signal detection | Continuous (1min bars) | $0 |

| Trade execution | Event-driven | $0 |

| Hyperliquid API | Continuous | $0 |

| VPS | 24/7 | ~$0.10 |

| LLM (research/orchestration) | Sporadic | Variable, not per-trade |

| Trading operations total | ~$0.10 |

The $1.30/day number was wrong because it assumed the LLM was in the hot path. It isn’t. The actual trading operation costs a dime a day. The LLM cost is a research expense — real, sometimes substantial, but amortized across strategy development, not charged per trade.

The Fill Assumption Problem

Here’s the part I buried in the original post: the edge might not survive execution.

Backtesting a scalper on 1-minute OHLCV bars means you’re assuming you can fill at the close of the signal bar. In reality, your limit order sits in the book and waits. During a liquidation cascade — the exact moment you want to enter — the book is thin, spreads are wide, and fills are uncertain.

Walk-forward backtests show profit factor 2.56 with perfect fills. Worst-case fill assumptions (one tick of slippage per side) drop it to 0.25. That’s the difference between a real strategy and an expensive way to pay market makers.

Real fills are somewhere between those extremes. We’re collecting live execution data to find out where. Until we have enough trades to measure actual fill quality, the strategy’s profitability is an open question, not a proven result.

This is the kind of honesty that the original post lacked. “We built a $1.30 trading agent” sounds better than “we built a $0.10 trading agent whose edge might vanish on execution.” But the second version is true.

Why Hyperliquid (Still Valid)

The exchange choice hasn’t changed, and the reasons hold:

- Zero gas fees — execution cost is literally zero, which matters for a scalper doing small notional trades

- On-chain transparency — every trade verifiable, no black-box exchange games

- Deep enough liquidity — SOL and ETH books handle $100 notional without moving price (BTC was dropped — the cascade dynamics were dead on the pairs we tested)

- Simple API — REST + WebSocket, clean enough that the entire executor is one Python file

- 24/7 markets — no session open/close logic

What We Got Right, What We Got Wrong

Right: The three-layer architecture concept. Separating data collection, intelligence, and execution is correct. The mistake was assuming “intelligence” meant “LLM inference on every cycle.”

Right: The calibration obsession. The panic scorer had to be rebuilt from scratch after we discovered the thresholds were 40× too high. That instinct — don’t trust your intuition about what looks extreme, measure it — saved us from a noise machine.

Wrong: The cost framing. “$1.30/day” was a marketing number for a system that didn’t exist. The real system costs less, but the cost model is completely different.

Wrong: The implicit assumption that LLM reasoning adds alpha to trade execution. For this class of signal — mechanical, fast, binary — it doesn’t. The LLM’s value is upstream: finding the strategy, validating it, killing the ones that don’t work.

Open: Whether any of this makes money. The strategy is live. The fills are being collected. The postmortem continues.

The cascade-fade strategy in detail: Fading Liquidation Cascades. The BGCSP research that optimized it: A 298-Page Thesis. The 31 strategies tested to find it: 31 Strategies Tested, 4 Survived. Architecture overview: Autonomous Agents.