Markets Are Languages, Not Physics

Why treating markets as language problems yields better models than treating them as physics problems. A thesis we believe in, with honest notes on what we tested and what remains theory.

The Wrong Metaphor

Quantitative finance stole from physics for 50 years. Brownian motion. Stochastic differential equations. Black-Scholes is literally the heat equation wearing a suit.

Fine for pricing. Fine for risk. Useless for prediction.

Particles don’t panic. Gravity doesn’t flinch when Jay Powell swaps one word for another in a press conference. Markets are made of narrative-addicted, herd-following primates producing sequential signals. The best framework for modeling them was never thermodynamics. It’s linguistics.

The Renaissance Clue

The most successful quant fund in history wasn’t built by finance people. Renaissance hired cryptographers, speech recognition researchers, and computational linguists. Robert Mercer came from IBM’s speech recognition team. Peter Brown did machine translation.

They didn’t hire economists. They hired people who understood pattern recognition in sequential data — which is what NLP is.

The public record suggests they treat market data as a language: a stream of symbols (price movements, volume patterns, order flow) with statistical regularities that function like grammar. You don’t need to know why a word follows another to predict it. You need the statistical structure. The word “the” is almost always followed by a noun or adjective. That’s not understanding. That’s pattern recognition. And it works.

This insight shaped everything we tried to build. Whether it was the right insight is a different question. We’ll get there.

Markets as Token Sequences

The mapping between language and markets is tighter than it looks:

- Words/tokens → Price bars, volume, order flow. The atomic units of market speech.

- Grammar → Market microstructure. What sequences are legal.

- Sentences → Trading sessions. Bounded chunks with internal logic.

- Context → Regime. The same pattern means different things in different macro environments. “Bank” near a river vs “bank” near money.

- Semantics → Fundamental value. The meaning beneath the squiggly line.

- Pragmatics → Trader intent. What the market said vs what it meant.

- Register → Volatility regime. Formal English vs drunk texting. Compressed ranges vs limit-down chaos.

GPT doesn’t know what a cat is. It uses the word correctly because it learned the regularities. A market model doesn’t need to understand why prices move. It needs to learn how they move — the grammar of price action.

This is either a profound insight or a seductive analogy. We spent months finding out which.

Tokenization: The Proof of Concept That Stayed a Concept

We played with discretizing OHLCV bars into composite tokens — encoding direction, magnitude (normalized by ATR), relative volume, and volatility regime into symbols like P12V3R2. Converting continuous price action into a finite vocabulary.

Still sitting in a notebook somewhere. Never made it to production. The idea is sound — if you squint at what Renaissance probably does, some version of this is in the stack — but “the idea is sound” and “it makes money” are separated by a canyon we haven’t crossed.
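To make the idea concrete, here is a minimal sketch of what such a tokenizer could look like. It is not the notebook code. The bucket edges, the 14-bar ATR window, the 50-bar regime window, and the reading of `P12V3R2` as "direction digit plus magnitude bucket, volume bucket, regime bucket" are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Bar:
    open: float
    high: float
    low: float
    close: float
    volume: float


# Hypothetical bucket edges; a real vocabulary would be tuned per instrument.
MAGNITUDE_EDGES = (0.25, 0.5, 1.0, 2.0)  # |close - open| in ATR units
VOLUME_EDGES = (0.5, 1.0, 1.5, 2.5)      # volume relative to trailing mean
REGIME_EDGES = (0.75, 1.25)              # ATR relative to its own trailing mean


def true_range(bar: Bar, prev_close: float) -> float:
    return max(bar.high - bar.low,
               abs(bar.high - prev_close),
               abs(bar.low - prev_close))


def bucket(value: float, edges: Sequence[float]) -> int:
    """Index of the first edge the value falls below, or len(edges) if none."""
    for i, edge in enumerate(edges):
        if value < edge:
            return i
    return len(edges)


def tokenize(bars: List[Bar], window: int = 14, regime_window: int = 50) -> List[str]:
    """Map each bar (after a warm-up period) to a symbol like 'P12V3R2'."""
    tokens: List[str] = []
    true_ranges: List[float] = []
    atrs: List[float] = []
    for i in range(1, len(bars)):
        bar, prev = bars[i], bars[i - 1]
        true_ranges.append(true_range(bar, prev.close))
        if len(true_ranges) < window:
            continue  # not enough history for an ATR yet
        atr = sum(true_ranges[-window:]) / window  # simple-average ATR
        atrs.append(atr)
        avg_volume = sum(b.volume for b in bars[i - window + 1:i + 1]) / window
        if atr == 0 or avg_volume == 0:
            continue  # skip degenerate bars rather than divide by zero
        body = bar.close - bar.open
        direction = 1 if body >= 0 else 0
        magnitude = bucket(abs(body) / atr, MAGNITUDE_EDGES)
        rel_volume = bucket(bar.volume / avg_volume, VOLUME_EDGES)
        recent_atrs = atrs[-regime_window:]
        regime = bucket(atr / (sum(recent_atrs) / len(recent_atrs)), REGIME_EDGES)
        tokens.append(f"P{direction}{magnitude}V{rel_volume}R{regime}")
    return tokens
```

With these edges the vocabulary is finite and small: 2 directions × 5 magnitudes × 5 volume buckets × 3 regimes = 150 possible tokens. That is the whole point of the exercise, and also where the design risk sits: too few buckets and every bar looks the same, too many and most tokens are never seen twice.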

Perplexity as a Regime Detector (Still Hypothetical)

The most interesting output of a language model isn’t the prediction — it’s the perplexity. How surprised is the model by what it’s seeing?

Applied to markets: high perplexity = the market is behaving unlike anything the model has seen. A regime change detector that doesn’t need you to specify what changed. The perplexity spike just tells you something shifted.

We hypothesized that rolling perplexity on tokenized price sequences would spike before major volatility events. The idea sits next to our entropy collapse work: entropy measures the information content of returns directly, while perplexity measures how unexpected the sequence of states is.

Honest status: we never built a working n-gram perplexity model. The entropy collapse signal (ECVT) it was supposed to complement is dead. This is a thesis we find compelling, not a result we can cite. The distinction matters.
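For readers who want to see what the untested idea would involve, here is a minimal sketch of rolling perplexity over a token stream. Perplexity is the exponential of the average negative log-probability the model assigns to what actually happened. The bigram model, the add-one smoothing, and the `train_size` and `window` defaults are assumptions chosen for brevity. Nothing here has been validated on market data.

```python
import math
from collections import Counter
from typing import List, Sequence


def bigram_perplexity(train: Sequence[str], test: Sequence[str], vocab_size: int) -> float:
    """Perplexity of `test` under an add-one-smoothed bigram model fit on `train`."""
    pair_counts = Counter(zip(train, train[1:]))
    context_counts = Counter(train[:-1])
    log_prob = 0.0
    n = 0
    for prev, cur in zip(test, test[1:]):
        # Add-one smoothing keeps unseen transitions from having zero probability.
        p = (pair_counts[(prev, cur)] + 1) / (context_counts[prev] + vocab_size)
        log_prob += math.log(p)
        n += 1
    return math.exp(-log_prob / n) if n else float("nan")


def rolling_perplexity(tokens: Sequence[str], vocab_size: int,
                       train_size: int = 500, window: int = 50) -> List[float]:
    """Score each trailing window against a model fit on the tokens just before it."""
    scores: List[float] = []
    for end in range(train_size + window, len(tokens) + 1):
        start = end - window
        train = tokens[start - train_size:start]  # strictly earlier than the scored window
        scores.append(bigram_perplexity(train, tokens[start:end], vocab_size))
    return scores
```

The model is always fit on tokens that precede the window it scores, so a spike means "the recent past did not prepare the model for this", which is the regime-shift reading described above. Whether such spikes lead volatility events or merely coincide with them is exactly the part that was never tested.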

LLMs as Context Layers

If markets are languages, LLMs should be useful. Not as crystal balls — as readers.

We explored this with our LLM trading agent. Feed it quantitative signals and market context, let it assess whether conditions favor a trade. The LLM doesn’t predict price. It reads the room.

Long-range dependencies? LLMs handle those. Multiple data types at once? Native. Probability distributions as output? Built in.
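A minimal sketch of the "reader, not crystal ball" arrangement, under stated assumptions. The `llm` argument is a hypothetical callable that takes a prompt string and returns the model's text reply; it stands in for whatever client is actually used. The prompt wording, the JSON reply schema, and the `Verdict` type are also invented for illustration and are not the agent described here.

```python
import json
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Verdict:
    favorable: bool
    confidence: float
    rationale: str


def build_prompt(signals: Dict[str, float], context: str) -> str:
    """Render signals and free-text context into one prompt (hypothetical wording)."""
    lines = [f"- {name}: {value:.4f}" for name, value in sorted(signals.items())]
    return (
        "You are assessing market conditions, not predicting price.\n"
        "Quantitative signals:\n" + "\n".join(lines) + "\n"
        f"Market context: {context}\n"
        'Reply with JSON only: {"favorable": true|false, '
        '"confidence": 0..1, "rationale": "..."}'
    )


def assess(signals: Dict[str, float], context: str,
           llm: Callable[[str], str]) -> Verdict:
    """Ask the model whether conditions favor a trade; fail closed on bad replies."""
    raw = llm(build_prompt(signals, context))
    try:
        data = json.loads(raw)
        confidence = min(1.0, max(0.0, float(data["confidence"])))  # clamp to [0, 1]
        return Verdict(data["favorable"] is True, confidence,
                       str(data.get("rationale", "")))
    except (ValueError, KeyError, TypeError, AttributeError):
        # An unparseable or malformed reply is treated as "do not trade".
        return Verdict(False, 0.0, "unparseable model reply")
```

The design choice worth noting is the failure mode: anything the parser cannot read becomes an unfavorable verdict with zero confidence, so the language layer can only veto or pass a trade that the quantitative signals already proposed.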

The architecture makes sense. The track record doesn’t exist. We never deployed it live. The concept paper writes better than the P&L statement, and anyone who’s been in this game knows that’s a red flag shaped like a thesis.

Where the Metaphor Breaks

Three fractures. They matter more than the elegance.

Non-stationarity. English grammar doesn’t mutate quarter to quarter. Market grammar does. A model trained on 2020 data is speaking a dead language by 2025. We’ve seen this — strategies that work on one pair, one timeframe, one epoch, and nothing else. The past tense doesn’t predict the future conjugation.

Adversarial speakers. Language doesn’t have speakers actively trying to make you misparse the sentence. Markets do. When enough participants learn a pattern, it gets arbitraged out or weaponized against latecomers. Your edge has an expiration date. English doesn’t.

Reflexivity. Predicting the next word doesn’t change what the word will be. Your trade is part of the price. The observer contaminates the observation. Soros built an entire philosophy on this. It’s the deepest crack in the metaphor, and the one nobody has a clean answer for.

What Actually Survived

Time for the uncomfortable part.

The markets-as-language thesis shaped our thinking. It’s why we reached for entropy, sequence models, and information-theoretic signals. Compelling framework. Most of what it inspired is dead:

- FVG (fair value gaps): gap-fill physics is real (78% fill rate), but you still lose money. Backtesting on OHLC bars inflated profit by 4×. Buried.

- ECVT — entropy collapse predicted volatility magnitude on EURUSD hourly. One pair, one timeframe. Obituary written. Gone.

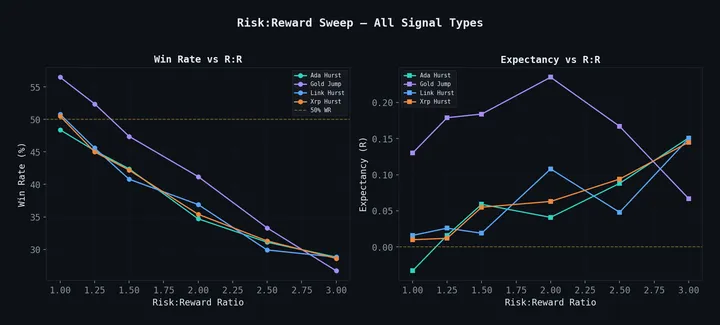

- Hurst — promised regime detection, delivered lies. Classified 100% of crypto bars as trending. Pathological.

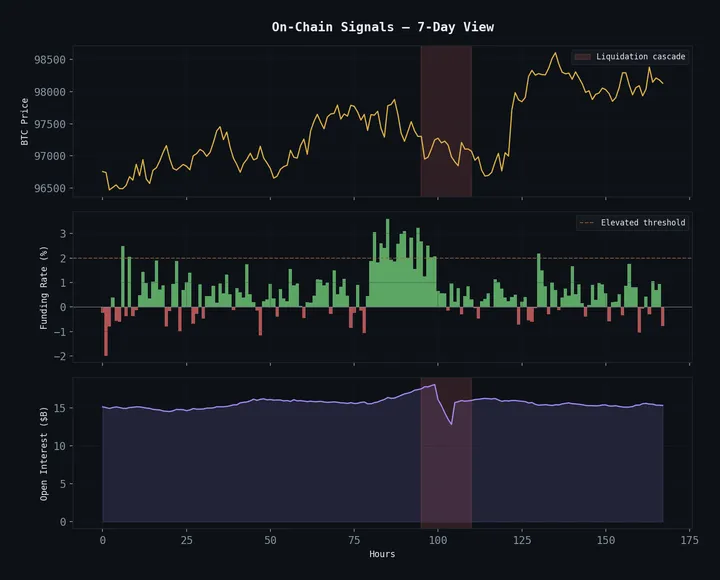

- Cascade Fade — alive. 1-3 minute holds on crypto liquidation overshoots. The one survivor doesn’t even lean on the language metaphor. It’s pure microstructure mechanics.

Four inspired, three dead, and the one that lived has nothing to do with the thesis. That’s a 0% hit rate for the framework as a direct strategy generator.

But here’s the thing — the framework isn’t wrong. Markets are more like languages than physics. The analogy does point you toward better tools (sequence models, information theory, pattern recognition over differential equations). The mistake was expecting the metaphor to generate alpha directly instead of using it as a compass.

Compasses don’t dig gold. They point you toward the mountain. You still have to mine.

Markets aren’t physics. They aren’t efficient random walks. They aren’t deterministic systems waiting to be solved. They’re messy, evolving, reflexive, narrative-driven sequences produced by imperfect agents under pressure.

Calling them languages is the best metaphor we’ve found. We just haven’t figured out how to read them profitably through it yet. The graveyard keeps growing. The compass stays in the bag.

The body trail: FVG Magnetism (a funeral), Entropy Collapse (a death notice), Hurst Exponents (catching a liar), and 38 Strategies Tested (the full graveyard).